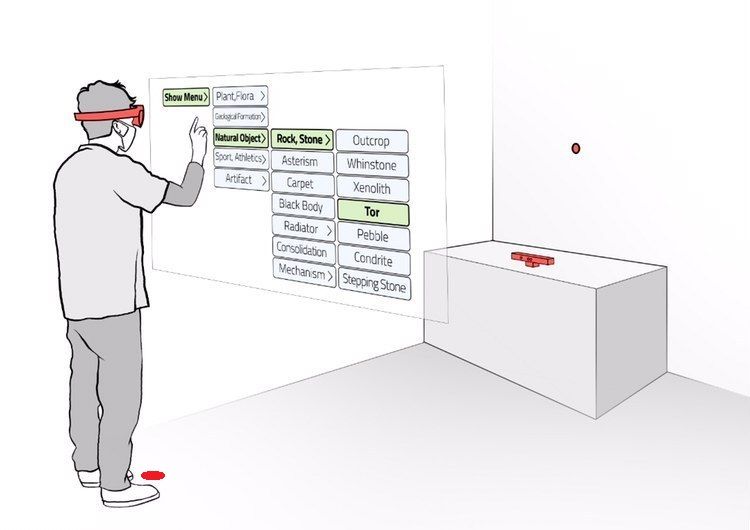

Predicting Human Performance in Vertical Hierarchical Menu Selection in Immersive AR Using Hand-gesture and Head-gaze

The paper "Predicting Human Performance in Vertical Hierarchical Menu Selection in Immersive AR Using Hand-gesture and Head-gaze", authored by Majid Pourmemar, Yashas Joshi, and Charalambos Poullis, will be presented at the 15th Conference on Human System Interaction, 2022.

TL;DR: User studies are expensive, time-consuming, and primarily based on subjective measures. We present a sequence-to-sequence model that predicts human performance based on objective (consumed endurance, error rate, duration) and subjective (WAIS) measures, and that can be used in place of a user study. The model is trained on a large lexical database of English words (WordNet).