Simpler is better: Multilevel Abstraction with Graph Convolutional Recurrent Neural Network Cells for Traffic Prediction

The preprint of our paper, "Simpler is better: Multilevel Abstraction with Graph Convolutional Recurrent Neural Network Cells for Traffic Prediction", is available on arXiv. The work is co-authored by Naghmeh Shafiee Roudbari, Zachary Patterson, Ursula Eicker, and Charalambos Poullis.

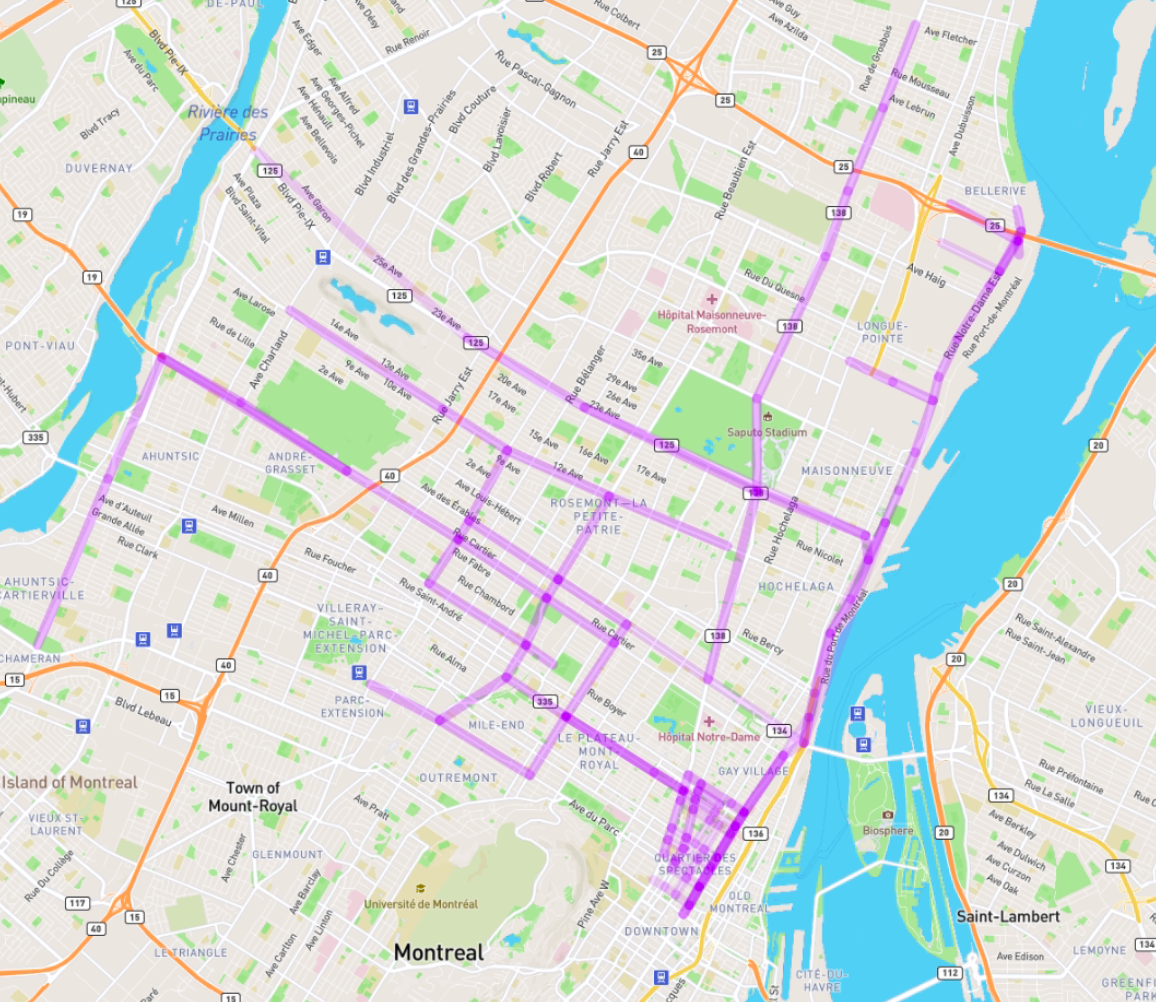

TL;DR: We present a sequence-to-sequence architecture that extracts spatiotemporal correlations at multiple levels of abstraction using GNN-RNN cells, with a sparse architecture that decreases training time compared to more complex designs. Our method improves performance by more than 7% for one-hour prediction compared to the baseline methods, while reducing computing resource requirements by more than half compared to other competing methods. Furthermore, we introduce the Montreal street-level traffic dataset (MSLTD), which provides traffic speed over 15-minute intervals, and make it publicly available: https://github.com/naghm3h/MSLTD
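
To give a rough feel for what a GNN-RNN cell does, here is a minimal NumPy sketch of a generic graph-convolutional GRU cell. It is not the architecture from the paper: the class name `GraphConvGRUCell`, the symmetric adjacency normalization, the toy 4-node road graph, the hidden size, and the random untrained weights are all assumptions made for illustration, and bias terms are omitted. The idea it shows is that each GRU gate mixes a node's features with those of its graph neighbours before the usual recurrent update.

```python
import numpy as np


def normalize_adjacency(adj):
    """Symmetric normalization D^-1/2 (A + I) D^-1/2 (an assumed, common choice)."""
    a_hat = adj + np.eye(adj.shape[0])      # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


class GraphConvGRUCell:
    """Hypothetical GRU cell whose gates use one graph-convolution step."""

    def __init__(self, in_dim, hidden_dim, rng):
        cat_dim = in_dim + hidden_dim
        # Random, untrained weights; biases are left out for brevity.
        self.w_z = rng.normal(0.0, 0.1, (cat_dim, hidden_dim))  # update gate
        self.w_r = rng.normal(0.0, 0.1, (cat_dim, hidden_dim))  # reset gate
        self.w_h = rng.normal(0.0, 0.1, (cat_dim, hidden_dim))  # candidate state
        self.hidden_dim = hidden_dim

    def step(self, a_norm, x, h):
        """x: (nodes, in_dim), h: (nodes, hidden_dim) -> new h of the same shape."""
        xh = np.concatenate([x, h], axis=1)
        z = sigmoid(a_norm @ xh @ self.w_z)
        r = sigmoid(a_norm @ xh @ self.w_r)
        xrh = np.concatenate([x, r * h], axis=1)
        h_tilde = np.tanh(a_norm @ xrh @ self.w_h)
        return (1.0 - z) * h + z * h_tilde


# Toy road graph: 4 segments connected in a line (assumed for illustration).
adj = np.array([[0, 1, 0, 0],
                [1, 0, 1, 0],
                [0, 1, 0, 1],
                [0, 0, 1, 0]], dtype=float)
a_norm = normalize_adjacency(adj)

rng = np.random.default_rng(0)
cell = GraphConvGRUCell(in_dim=1, hidden_dim=8, rng=rng)

# Four 15-minute steps (one hour) of made-up, scaled speeds per segment.
speeds = rng.uniform(0.0, 1.0, size=(4, 4, 1))  # (time, nodes, features)

h = np.zeros((4, cell.hidden_dim))
for x_t in speeds:
    h = cell.step(a_norm, x_t, h)  # encoder pass over the input sequence
```

After the loop, `h` holds one hidden vector per road segment summarizing the input hour. In a sequence-to-sequence setup, a decoder built from the same kind of cell would start from this state to produce the future speeds.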